At VulnCon '26, we asked the audience a deceptively simple question: can you trust your vulnerability scanner? The honest answer is yes and no. And the "no" part deserves a lot more attention than most security teams give it.

Here is a recap of what the talk covered, why it matters, and what you can do about it today.

The Core Problem: Scanning Is Not a Solved Problem

Most teams operate under a comfortable assumption: run a scanner, get a list, fix the list. The reality is much messier.

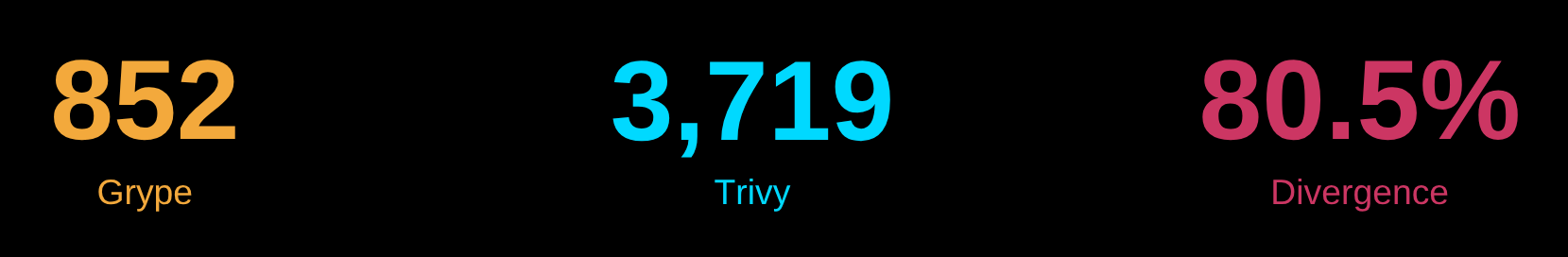

We ran two widely used open source scanners, Grype and Trivy, against the same Red Hat 8 image. The results were striking. Grype surfaced 852 CVEs. Trivy surfaced 3,709. That is an 80.5% divergence, and both scanners were doing exactly what they were designed to do.
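This recap does not state how the 80.5% figure was calculated. As a rough sketch of one common way to quantify disagreement between two scanners, the snippet below computes the Jaccard distance between two sets of findings: the share of the combined findings that only one scanner reported. The function name and the CVE IDs are made up for illustration and are not the talk's data.

```python
def divergence(a: set[str], b: set[str]) -> float:
    """Jaccard distance: fraction of the combined findings reported by only one scanner."""
    union = a | b
    if not union:
        return 0.0  # two empty result sets agree trivially
    return 1 - len(a & b) / len(union)


# Hypothetical CVE IDs, for illustration only.
scanner_a = {"CVE-0000-0001", "CVE-0000-0002", "CVE-0000-0003"}
scanner_b = {"CVE-0000-0002", "CVE-0000-0003", "CVE-0000-0004", "CVE-0000-0005"}

# 2 shared findings out of 5 distinct ones.
print(f"{divergence(scanner_a, scanner_b):.1%}")  # 60.0%
```

A set-based measure like this can report high divergence even when raw counts look similar, because it compares which CVEs were found, not how many.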

Neither scanner is wrong, exactly. They are just making different logic decisions. And those decisions compound in ways that most engineers never trace back to their root cause.

How Matching Actually Works

Before you can understand why scanners disagree, you need to understand what they are actually doing under the hood.

The pipeline looks like this:

- The scanner reads your container or repository and builds a list of packages, often producing an SBOM (Software Bill of Materials) in the process.

- Each package is assigned an identifier, either a CPE (Common Platform Enumeration) or a PURL (Package URL), or sometimes just a name and vendor.

- That identifier is matched against a vulnerability database such as NVD, OSV, or ecosystem-specific security advisories.

- The output is a list of CVEs with scores and, sometimes, fix information.

The matching logic in the third step is where everything diverges.
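To make the pipeline concrete, here is a deliberately tiny sketch of it. Everything in it is hypothetical: the `Package` class, the simplified PURL-like identifier, and the toy database stand in for what real scanners do with far more nuance. It shows how a small difference in cataloging changes what the matching step can find.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Package:
    name: str
    version: str
    ecosystem: str  # e.g. "pypi" or "rpm"


def to_identifier(pkg: Package) -> str:
    """Assign an identifier (a simplified PURL-like string, assumed for this sketch)."""
    return f"pkg:{pkg.ecosystem}/{pkg.name}@{pkg.version}"


# Toy vulnerability database keyed by identifier. Entries are made up.
TOY_DB = {"pkg:pypi/examplelib@1.0.0": ["CVE-0000-0001"]}


def scan(packages: list[Package], db: dict[str, list[str]]) -> dict[str, list[str]]:
    """Match each package identifier against the database and collect findings."""
    findings = {}
    for pkg in packages:
        ident = to_identifier(pkg)
        cves = db.get(ident, [])
        if cves:
            findings[ident] = cves
    return findings


# Two catalogers see the same software but name it differently.
cataloged_a = [Package("examplelib", "1.0.0", "pypi")]
cataloged_b = [Package("example-lib", "1.0.0", "pypi")]

print(scan(cataloged_a, TOY_DB))  # {'pkg:pypi/examplelib@1.0.0': ['CVE-0000-0001']}
print(scan(cataloged_b, TOY_DB))  # {}
```

Exact-string lookup is the crudest possible matcher. Real scanners use fuzzier logic, which is exactly where their policy decisions start to differ.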

The Identifier Problem: CPEs vs. PURLs

CPEs: Designed for Commercial Software, Riddled with Gaps

CPEs are the identifier format used by NIST's National Vulnerability Database (NVD). They encode vendor, product, version, and other metadata. Many compliance frameworks require them because NVD identifies affected products exclusively with CPEs.

But there are serious structural problems:

- Vendor and product strings are freeform, so they can contain anything.

- Forks and distro repackaging routinely break vendor attribution. Lexi's favorite example: three distinct Google protobuf repositories all share the same CPE.

- There is no native ecosystem concept, and distribution backports are not modeled cleanly.
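A short sketch can show why freeform vendor strings hurt matching. The parser below splits a CPE 2.3 formatted string into its named fields. It is intentionally naive (it does not handle escaped colons), and the vendor and product values are invented for illustration.

```python
# Field order of a CPE 2.3 formatted string, after the "cpe:2.3:" prefix.
CPE_FIELDS = [
    "part", "vendor", "product", "version", "update", "edition",
    "language", "sw_edition", "target_sw", "target_hw", "other",
]


def parse_cpe23(cpe: str) -> dict[str, str]:
    """Naive parser: assumes no escaped ':' characters inside any field."""
    parts = cpe.split(":")
    if parts[:2] != ["cpe", "2.3"] or len(parts) != 2 + len(CPE_FIELDS):
        raise ValueError(f"not a CPE 2.3 formatted string: {cpe!r}")
    return dict(zip(CPE_FIELDS, parts[2:]))


# Hypothetical CPEs: same product, two spellings of the vendor.
from_sbom = parse_cpe23("cpe:2.3:a:example_vendor:example_product:1.2.3:*:*:*:*:*:*:*")
from_db = parse_cpe23("cpe:2.3:a:examplevendor:example_product:1.2.3:*:*:*:*:*:*:*")

print(from_sbom["product"] == from_db["product"])  # True
print(from_sbom["vendor"] == from_db["vendor"])    # False
```

Nothing in the format says which vendor spelling is right, so a matcher has to either miss this pair or loosen its comparison and risk false positives.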

PURLs: Better, But Not Perfect Either

PURLs add the crucial concept of an ecosystem, so they work naturally with npm, PyPI, Maven, Cargo, and others. They are increasingly preferred in OSV and modern advisories like GHSA.

However, PURLs have their own issues. They are not widely adopted for commercial software. The qualifier fields after the version are completely unstandardized. Scanners, SBOM generators, and vulnerability databases all use those qualifiers differently, and that inconsistency is a major source of divergence.
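The qualifier issue is easier to see with a parsed example. The following is a simplified PURL parser using only the standard library. It is a sketch that skips parts of the PURL specification (for example, type-specific normalization rules), and the PURL values are illustrative rather than copied from any tool's output.

```python
from urllib.parse import parse_qsl, unquote


def parse_purl(purl: str) -> dict:
    """Split pkg:type/namespace/name@version?qualifiers#subpath (simplified)."""
    scheme, _, rest = purl.partition(":")
    if scheme != "pkg" or not rest:
        raise ValueError(f"not a PURL: {purl!r}")
    rest, _, subpath = rest.partition("#")
    rest, _, query = rest.partition("?")
    path, at, version = rest.rpartition("@")
    if not at:  # no version present
        path, version = rest, ""
    pkg_type, _, remainder = path.partition("/")
    namespace, _, name = remainder.rpartition("/")
    return {
        "type": pkg_type.lower(),
        "namespace": unquote(namespace),
        "name": unquote(name),
        "version": unquote(version),
        "qualifiers": dict(parse_qsl(query)),
        "subpath": subpath,
    }


def same_package(a: str, b: str) -> bool:
    """Compare only the core coordinates, ignoring qualifiers."""
    pa, pb = parse_purl(a), parse_purl(b)
    return all(pa[k] == pb[k] for k in ("type", "namespace", "name", "version"))


# Illustrative PURLs for one package, as two different tools might emit them.
with_upstream = "pkg:rpm/redhat/vim-minimal@8.0.1763?arch=x86_64&upstream=vim"
without_upstream = "pkg:rpm/redhat/vim-minimal@8.0.1763?arch=x86_64"

print(with_upstream == without_upstream)             # False
print(same_package(with_upstream, without_upstream)) # True
print(parse_purl(with_upstream)["qualifiers"])       # {'arch': 'x86_64', 'upstream': 'vim'}
```

Both strings describe the same package, yet only one carries the extra hint. Whether a downstream tool reads, ignores, or misreads that hint is left to each implementation.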

The Data Source Problem: Where You Look Matters

The database your scanner queries shapes what it can find. Here is a quick landscape of the major sources:

NVD (NIST National Vulnerability Database): Manually curated, CPE-only, broad commercial coverage. The downside is delayed enrichment that can lag by weeks, months, or even years, plus inconsistent CVSS data.

CVE.org (MITRE CVE Program): Reserves IDs and often has product and vendor data before NVD catches up, but suffers from inconsistent CNA descriptions and slow upstream propagation.

OSV (Google's Open Source Vulnerability Database): Ecosystem-native, PURL-only, with fast community updates because ecosystems and advisories write directly to it. Coverage is uneven across ecosystems.

Vendor Advisories (GHSA, Red Hat, etc.): Most accurate for their own ecosystems. The catch is that they can disagree with NVD and formats vary widely.

Real Divergence, Real Examples

Here are three specific examples to show that the divergence is not random noise. It is structured.

Kernel Headers

Trivy surfaced 2,974 CVEs for the kernel headers package. Grype surfaced zero. Why? Kernel headers is a headers-only dev package linked to the Linux kernel, which carries enormous CVE volume. Grype applies a default suppression rule because many people believe that having kernel headers installed does not make you vulnerable to those kernel CVEs. Neither scanner is wrong. They just make different policy calls.

Setup Tools

Trivy flagged zero vulnerabilities. Grype flagged three. The reason: Trivy did not catalog these Python packages during the scan, so it could not look for CVEs against them. Grype did.

Tomcat Catalina

Trivy found 16 CVEs. Grype found zero. Here, Grype inferred the Maven Group ID from the directory path and got it wrong. Trivy looked it up in an internal Java index database and correctly identified the package. Same two scanners, different package type, opposite outcome.

The pattern holds throughout: no scanner gets it right every time. They are better in different circumstances.

The SBOM Generator and Format Problem

The trouble does not stop at the scanner. If you are scanning an SBOM directly, the tool that generated that SBOM shapes what the scanner can find.

We ran a mixing experiment with multiple SBOM generators and scanners. The headline finding: scanning a Syft-generated SBOM with Grype versus scanning a Trivy-generated SBOM with Grype produced a 66% difference in results.

Why? Syft injects additional metadata into its PURLs, including an upstream qualifier that links packages like vim-minimal back to vim. This allows Grype to find CVEs for both. Trivy does not include that qualifier, so Grype only looks for vim-minimal CVEs and finds nothing.

The SBOM generator is doing work that the scanner depends on. If you swap generators, you lose that work silently.
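The effect of that one qualifier can be sketched in a few lines. This is not Grype's actual matching code. It is a hypothetical illustration in which the matcher searches for advisories under the package name plus any upstream name the SBOM supplies. The advisory data is invented, and the `upstream` value is simplified to a bare package name.

```python
# Toy advisory index keyed by package name. Entries are made up.
TOY_ADVISORIES = {"vim": ["CVE-0000-0001"]}


def candidate_names(name: str, qualifiers: dict[str, str]) -> list[str]:
    """Names to search under: the package itself, plus its upstream if provided."""
    names = [name]
    upstream = qualifiers.get("upstream")
    if upstream and upstream not in names:
        names.append(upstream)
    return names


def match(name: str, qualifiers: dict[str, str]) -> list[str]:
    """Collect advisories filed under any candidate name."""
    found = set()
    for candidate in candidate_names(name, qualifiers):
        found.update(TOY_ADVISORIES.get(candidate, []))
    return sorted(found)


# Same package, described by two hypothetical SBOM generators.
print(match("vim-minimal", {"arch": "x86_64", "upstream": "vim"}))  # ['CVE-0000-0001']
print(match("vim-minimal", {"arch": "x86_64"}))                     # []
```

The second call returns an empty list with no warning, and that empty list looks the same as a clean result.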

What this means for your toolchain:

- Match your generator to your scanner. Syft and Grype are built together by Anchore. Trivy generates and scans in one tool. Do not cross-pollinate naively.

- Upgrade your generator and scanner together. If one drifts behind the other, the metadata contract between them breaks.

- SBOM format matters too. In the experiments, Syft and Grype on CycloneDX produced significantly more results than the same tools on SPDX. You lose fidelity when the format does not carry the metadata your scanner expects.

Common Mistakes to Avoid

- Trusting a clean scan as proof of safety. Zero results can mean your software is clear, or it can mean the scanner missed something. Always verify which one.

- Cross-pollinating SBOM generators and scanners from different vendors. Metadata injected by one generator is invisible to a scanner that was not built to read it.

- Using severity scores without knowing their source. CVSS scores are submitted by CNAs, and different CNAs score the same vulnerability differently. Grype defaults to NVD scores. Trivy often uses the vendor's score, such as Red Hat's, which reflects vendor-specific mitigations. If you are gating on severity thresholds, this distinction changes which policies you violate.

- Treating "no fix version" and "fix version unavailable" as the same thing. One means you need to act now through mitigations or risk acceptance. The other means you can wait. Your team's time depends on telling these apart.

- Adding more scanners to get better coverage without understanding what each one adds. More scanners can mean more noise, not more signal. The right question is which scanner fits this package type, not which scanner finds the most CVEs.
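The severity-source point lends itself to a small worked example. The sketch below gates on a CVSS threshold using scores from two hypothetical sources. The findings and scores are invented; the label bands follow the CVSS v3.x qualitative severity scale.

```python
def severity_label(score: float) -> str:
    """Map a CVSS v3.x base score to its qualitative rating."""
    if score >= 9.0:
        return "Critical"
    if score >= 7.0:
        return "High"
    if score >= 4.0:
        return "Medium"
    if score > 0.0:
        return "Low"
    return "None"


# Hypothetical findings with one score per source.
FINDINGS = [
    {"cve": "CVE-0000-0001", "scores": {"nvd": 7.5, "vendor": 5.9}},
    {"cve": "CVE-0000-0002", "scores": {"nvd": 9.8, "vendor": 9.8}},
]


def gate_violations(findings: list[dict], source: str, threshold: float = 7.0) -> list[str]:
    """Return the CVEs that would fail a 'block at or above threshold' policy."""
    return [f["cve"] for f in findings if f["scores"][source] >= threshold]


print(gate_violations(FINDINGS, "nvd"))     # ['CVE-0000-0001', 'CVE-0000-0002']
print(gate_violations(FINDINGS, "vendor"))  # ['CVE-0000-0002']
print(severity_label(7.5), severity_label(5.9))  # High Medium
```

The same image passes or fails the gate depending only on which score source the scanner defaults to, so a policy should name its source explicitly.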

Verification Workflow: What to Do When Results Do Not Add Up

When you want to validate whether a CVE is a false positive, we recommend this sequence:

- Go to the vendor advisory first. For Red Hat packages, that means the Red Hat Security Advisory. These tend to be the closest source of truth. Check the name, epoch, version, release, and architecture precisely.

- Confirm the impacted version is present and that a fixed version is available for your system. Trivy sometimes reports a fixed version that is not available for the image stream you are running. Verify before you chase it.

- Cross-check with other data sources. Not being in OSV does not mean the vulnerability is not real. It may just mean OSV has not picked it up yet.

- If it is truly a false positive, suppress it with documented evidence. Do not just dismiss it. Write down why.

- Generalize the rule. One well-documented suppression rule applied at fleet scale can eliminate thousands of manual reviews. Grype's kernel headers suppression is the canonical example of this done well.
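The last two steps, documenting a suppression and generalizing it, can be sketched as a small data structure. This is a hypothetical format, not the ignore-rule syntax of Grype or any other scanner. The class refuses to build a rule without evidence, and the evidence string here is a placeholder.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class SuppressionRule:
    package_pattern: str  # shell-style pattern, e.g. "kernel-headers*"
    reason: str
    evidence: str         # where the analysis lives; must not be empty

    def __post_init__(self):
        if not self.evidence.strip():
            raise ValueError("a suppression rule needs documented evidence")

    def applies_to(self, package: str) -> bool:
        return fnmatchcase(package, self.package_pattern)


def apply_rules(findings, rules):
    """Split (package, cve) findings into kept and suppressed-with-reason."""
    kept, suppressed = [], []
    for package, cve in findings:
        rule = next((r for r in rules if r.applies_to(package)), None)
        if rule is None:
            kept.append((package, cve))
        else:
            suppressed.append((package, cve, rule.reason))
    return kept, suppressed


rule = SuppressionRule(
    package_pattern="kernel-headers*",
    reason="headers-only package; kernel CVEs judged not applicable",
    evidence="internal analysis record (placeholder)",
)
findings = [("kernel-headers", "CVE-0000-0001"), ("examplelib", "CVE-0000-0002")]
kept, suppressed = apply_rules(findings, [rule])
print(kept)             # [('examplelib', 'CVE-0000-0002')]
print(len(suppressed))  # 1
```

Keeping the reason attached to every suppressed finding means a later reviewer can audit the decision instead of rediscovering it.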

Quick Win: One Action You Can Take Today

Pull up an SBOM you are currently scanning. Check the tool metadata field to see which generator produced it. Then verify that the scanner you are using to scan that SBOM is from the same vendor or toolchain.

If they do not match, you may be losing significant findings or generating false positives silently. Fixing that one mismatch is the highest-leverage change most teams can make without adding any new tools.
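For SBOMs stored as JSON, that check can be scripted. The sketch below reads the generator from an already parsed document. It assumes the common layouts: CycloneDX `metadata.tools` (a list in older versions, an object with `components` in newer ones) and SPDX `creationInfo.creators` entries prefixed with `Tool:`. The sample documents are minimal fabrications for illustration.

```python
def sbom_generators(doc: dict) -> list[str]:
    """Best-effort extraction of the generating tool(s) from a parsed JSON SBOM."""
    if doc.get("bomFormat") == "CycloneDX":
        tools = doc.get("metadata", {}).get("tools", [])
        if isinstance(tools, dict):  # newer CycloneDX object form
            tools = tools.get("components", [])
        return [t["name"] for t in tools if t.get("name")]
    if "spdxVersion" in doc:
        creators = doc.get("creationInfo", {}).get("creators", [])
        return [c.split(":", 1)[1].strip() for c in creators if c.startswith("Tool:")]
    return []  # unknown format or missing metadata


# Minimal made-up documents; real SBOMs carry many more fields.
cyclonedx_doc = {
    "bomFormat": "CycloneDX",
    "metadata": {"tools": [{"vendor": "anchore", "name": "syft", "version": "0.0.0"}]},
}
spdx_doc = {
    "spdxVersion": "SPDX-2.3",
    "creationInfo": {"creators": ["Organization: Example", "Tool: trivy-0.0.0"]},
}

print(sbom_generators(cyclonedx_doc))  # ['syft']
print(sbom_generators(spdx_doc))       # ['trivy-0.0.0']
```

In practice you would load the file with `json.load` and compare the result against the scanner configured in your pipeline. An empty list is itself worth investigating.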

Conclusion

Vulnerability matching is not a problem you solve by picking the right scanner. It is a problem you manage by understanding where your tools agree, where they disagree, and why.

CPEs, PURLs, and vulnerability databases each introduce their own failure modes. Severity labels can reflect very different assumptions depending on the CNA that filed them. Two zeros can mean opposite things. And running a scanner is not the same thing as understanding your attack surface.

The goal is not to distrust your tools. It is to use them with enough context to interpret their output correctly.

The Manifest Platform is built around this challenge, helping teams get more signal out of their vulnerability data without drowning in noise. If you want to understand how Manifest approaches SBOM analysis and vulnerability verification at scale, reach out to the team or explore what Manifest Product Security can do for your organization.

Matching is hard. Knowing it is hard is the first step to doing it well.